Hello. I have a Kobo toolbox project linked to my inbox using the REST services webhook. Submissions are in my inbox. I would like to create a job that’s triggered by a weekly cron trigger to push all the week’s data into BigQuery. I don’t see an adaptor and very new to OpenFn. Please help. Thanks

Hi @kwesta , there’s an OpenFn adaptor for big query here (GitHub - OpenFn/language-bigquery: A language-package for working with Google's BigQuery API) but I see that a new release hasn’t been cut in some time.

Do you have some specifics around the request?

@mtuchi , do you think you could migrate this one over and help @kwesta get his job set up?

Hello @kwesta , i am migrating the adaptor to our adaptors monorepo (Github - OpenFn/adaptors),

When the release is done you will be able to use a helper function called load()

Here is an example of how it can be used

@example Load data to a table in bigquery from a CSV. See full

load(

'/path-to-your.csv',

'awesome-solutions-project', // project

'test01', // dataset

'mytable', // table

{

schemaUpdateOptions: ['ALLOW_FIELD_ADDITION'],

writeDisposition: 'WRITE_APPEND',

skipLeadingRows: 1,

}

);

Meanwhile, you can try implementing the job with language-http adaptor adaptors/packages/http at main · OpenFn/adaptors · GitHub

For Google Bigquery you can use their rest API to insert data into BigQuery

Method: datasets.insert | BigQuery | Google Cloud

Let me know how i can further assist you if you face any blocker

Thanks @mtuchi and @taylordowns2000 for the quick replies.

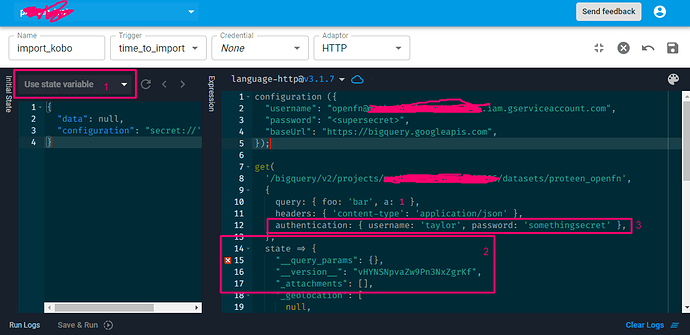

Since load() is not yet ready, you’ve suggested I used the http adaptor. Unfortunately I’m not very familiar with Javascript auth, but I don’t see how to write the authorization for my service account. Sorry in advance if these are very simple questions

I have 3 questions so far;

-

I don’t know what the “Initial state” section is for or how to use it. From the tutorial videos on Youtube, it appears to be for testing/authentication. Please advise

-

I don’t know how to insert authentication variables for my Google service account to receive the API requests.

-

The documentation for the http adaptor shows a configuration variable but I’m not sure how to update that for a service account (related to 2 above).

-

I already have sample data in my inbox, so that’s my starting point. There is already a BigQuery dataset and empty table on my account waiting to be populated.

Thanks again.

Hello @kwesta,

If your writing this job on openFn platform the first thing you need to configure is your credentials

In your case you need the following credentials to be set

inboxUrlthis isopenFninbox URL, we will use this URL to fetch data and put them in your initial state for the next operation- Credentials for Google Bigquery

accessToken// BigQuery Access TokenapiKey// BigQuery API KEYprojectId, // BigQuery projectIdbaseUrl// https://bigquery.googleapis.com/bigquery/v2/projects/

Then create a RAW JSON credential on openfn.org

:-> https://docs.openfn.org/documentation/build/example-build#3-create-credentials-to-connect-your-destination-system

Make sure your crendetials schema look like this

{

inboxUrl: "blablabla",

accessToken: "blablabla",

apiKey: "blablabla",

baseUrl: "blablabla",

}

Initial state

So based on your early description looks like your initial state is from the inbox

So the first operation would be to get that data from the state

get(`state.configuration.inboxUrl`, null, (state) => {

const dataFromKobo = state.data;

return { ...state, dataFromKobo };

});

Authentication from Google & Bigquery insert data

Google has a detailed instruction on how to use their Rest API

:-> Method: datasets.insert | BigQuery | Google Cloud Documentation

I recommend to use their API explorer on the right side panel to test and see the results

Here is an example on how to create an empty Dataset

curl --request POST \

'https://bigquery.googleapis.com/bigquery/v2/projects/[PROJECTID]/datasets?key=[YOUR_API_KEY]' \

--header 'Authorization: Bearer [YOUR_ACCESS_TOKEN]' \

--header 'Accept: application/json' \

--header 'Content-Type: application/json' \

--data '{}' \

--compressed

Using our http adaptor can be used like this

post(`{state.configuration.baseUrl}${state.configuratin.projectId}/datasets`, {

query: { key: state.configuration.apiKey },

headers: {

accept: "application/json",

"content-type": "application/json",

Authorization: "Bearer state.configuration.accessToken",

},

data: {

...state.dataFromKobo,

},

});

I hope that gives you an idea on how you can use the http adaptor to get data from your inbox then insert them in Bigquery

Feel free to book a quick meeting with me :-> Calendly if your still stuck